Every now and then, I am asked which tracing, logging or monitoring solution to use in a modern application, as the number of options seems to grow every month. To stay flexible and rely on open standards, OpenTelemetry is worth a closer look. It is becoming more and more popular because it offers a vendor-agnostic way to work with telemetry data in your services and send it to the backend(s) of your choice (Prometheus, Jaeger, etc.). Let’s have a look at how you can use OpenTelemetry custom metrics in an ASP.NET service in combination with what is probably the most popular monitoring stack in the cloud-native space: Prometheus / Grafana.

TL;DR

You can find the demo project on GitHub. It uses a local Kubernetes cluster (kind) to set up the environment and deploys a demo application that generates some sample metrics. Those metrics are sent to an OTEL collector, which exposes them on a Prometheus metrics endpoint. Finally, the metrics are scraped by Prometheus and displayed in a Grafana dashboard/chart.

OpenTelemetry – What is it and why should you care?

OpenTelemetry (OTEL) is an open-source CNCF project that aims to provide a vendor-agnostic solution for generating, collecting and handling telemetry data of your infrastructure and services. It is able to receive, process, and export traces, logs, and metrics to different backends like Prometheus, Jaeger or commercial SaaS offerings without the need for your application to have a dependency on those solutions. While OTEL itself doesn’t provide a backend or even analytics capabilities, it serves as the “central monitoring component” and knows how to send the data received to different backends by using so-called “exporters”.

So why should you even care? In today’s world of distributed systems and microservices architectures where developers can release software and services faster and more independently, observability becomes one of the most important features in your environment. Visibility into systems is crucial for the success of your application as it helps you in scaling components, finding bugs and misconfigurations etc.

If you haven’t decided what monitoring or tracing solution you are going to use for your next application, have a look at OpenTelemetry. It gives you the freedom to try out different monitoring solutions or even replace your preferred one later in production.

OpenTelemetry Components

OpenTelemetry currently consists of several components like the cross-language specification (APIs/SDKs and the OpenTelemetry Protocol OTLP) for instrumentation and tools to receive, process/transform and export telemetry data. The SDKs are available in several popular languages like Java, C++, C#, Go etc. You can find the complete list of supported languages here.

Additionally, there is a component called the “OpenTelemetry Collector” which is a vendor-agnostic proxy that receives telemetry data from different sources and can transform that data before sending it to the desired backend solution.

Let’s have a closer look at the components of the collector: receivers, processors and exporters.

- Receivers – A receiver is the component responsible for getting data into a collector. It can work in a push- or pull-based fashion: it can, for example, accept data via the OTLP protocol or scrape a Prometheus /metrics endpoint.

- Processors – Processors let you batch, sample, transform and/or enrich the telemetry data received by the collector before it is handed over to an exporter. You can add or remove attributes (for example, to strip “personally identifiable information”, PII) or filter data based on regular expressions. A processor is an optional component in a collector pipeline.

- Exporters – An exporter is responsible for sending data to a backend solution like Prometheus, Azure Monitor, DataDog, Splunk etc.
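To make the processor description above a bit more concrete, here is a small sketch of two processors in a collector configuration. It is not part of the demo: the attribute key user.email and the metric name pattern are hypothetical, and the attributes and filter processors have to be included in the collector distribution you run. The filter syntax has also changed between collector versions, so treat this as an illustration rather than a reference.

```yaml
processors:
  # Remove a (hypothetical) PII attribute from every data point
  attributes/scrub-pii:
    actions:
      - key: user.email
        action: delete
  # Drop metrics whose name matches a regular expression
  # (hypothetical pattern; older, non-OTTL filter syntax)
  filter/drop-internal:
    metrics:
      exclude:
        match_type: regexp
        metric_names:
          - '^internal\..*'
  batch:

service:
  pipelines:
    metrics:
      # the otlp receiver and prometheus exporter are assumed to be
      # defined elsewhere in the same configuration file
      receivers: [otlp]
      processors: [attributes/scrub-pii, filter/drop-internal, batch]
      exporters: [prometheus]
```

Processors run in the order in which they are listed in the pipeline, so scrubbing and filtering happen here before the data is batched.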

In the end, it comes down to configuring the collector service with receivers, (optionally) processors and exporters to form a fully functional collector pipeline – official documentation can be found here. The configuration for the demo here is as follows:

receivers:
  otlp:
    protocols:
      http:
      grpc:
processors:
  batch:
exporters:
  logging:
    loglevel: debug
  prometheus:
    endpoint: "0.0.0.0:8889"
service:
  pipelines:
    metrics:
      receivers: [otlp]
      processors: [batch]
      exporters: [logging, prometheus]

The configuration consists of:

- one OpenTelemetry Protocol (OTLP) receiver, enabled for HTTP and gRPC communication

- one processor that batches the telemetry data with default values (e.g. a timeout of 200ms)

- two exporters: one piping the data to the console (logging) and one exposing a Prometheus /metrics endpoint on 0.0.0.0:8889 (remote-write is also possible)
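The defaults mentioned above can also be written out explicitly. The following sketch shows the same kind of pipeline with the listen addresses and batch settings spelled out, plus the remote-write alternative. None of this is taken from the demo repository: the remote-write URL is a placeholder, the prometheusremotewrite exporter must be part of your collector distribution, and the Prometheus instance has to be configured to accept remote-write requests.

```yaml
receivers:
  otlp:
    protocols:
      http:
        endpoint: 0.0.0.0:4318   # OTLP over HTTP
      grpc:
        endpoint: 0.0.0.0:4317   # OTLP over gRPC
processors:
  batch:
    timeout: 200ms               # documented default
    send_batch_size: 8192        # documented default
exporters:
  prometheus:
    endpoint: "0.0.0.0:8889"     # pull: Prometheus scrapes this endpoint
  prometheusremotewrite:
    # push: placeholder URL, adjust to your Prometheus instance
    endpoint: "http://prometheus.example.internal:9090/api/v1/write"
service:
  pipelines:
    metrics:
      receivers: [otlp]
      processors: [batch]
      exporters: [prometheus, prometheusremotewrite]
```

Binding the receivers explicitly to 0.0.0.0 can matter in Kubernetes: newer collector releases may listen on localhost by default, which is not reachable from other pods.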

ASP.NET OpenTelemetry

To demonstrate how to send custom metrics from an ASP.NET application to Prometheus via OpenTelemetry, we first need a service that is exposing those metrics. In this demo, we simply create two custom metrics called otel.demo.metric.gauge1 and otel.demo.metric.gauge2 that will be sent to the console (AddConsoleExporter()) and via the OTLP protocol to a collector service (AddOtlpExporter()) that we’ll introduce later on. The application uses the ASP.NET Minimal API and the code is more or less self-explanatory:

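using System.Diagnostics.Metrics;
using OpenTelemetry.Resources;
using OpenTelemetry.Metrics;

var builder = WebApplication.CreateBuilder(args);

// Register the OpenTelemetry metrics pipeline: listen to our meter and export
// to the console and via OTLP. The OTLP endpoint and protocol are taken from
// the OTEL_EXPORTER_OTLP_* environment variables.
// Note: newer versions of OpenTelemetry.Extensions.Hosting replace this call
// with builder.Services.AddOpenTelemetry().WithMetrics(...).
builder.Services.AddOpenTelemetryMetrics(metricsProvider =>
{
    metricsProvider
        .AddConsoleExporter()
        .AddOtlpExporter()
        .AddMeter("otel.demo.metric")
        .SetResourceBuilder(ResourceBuilder.CreateDefault()
            .AddService(serviceName: "otel.demo", serviceVersion: "0.0.1")
        );
});

var app = builder.Build();

// The meter name must match the name passed to AddMeter() above
var otel_metric = new Meter("otel.demo.metric", "0.0.1");
var randomNum = new Random();

// Create two metrics; the callbacks are invoked each time the metrics are collected
var obs_gauge1 = otel_metric.CreateObservableGauge<int>("otel.demo.metric.gauge1", () =>
{
    return randomNum.Next(10, 80);
});

var obs_gauge2 = otel_metric.CreateObservableGauge<double>("otel.demo.metric.gauge2", () =>
{
    return randomNum.NextDouble();
});

app.MapGet("/otelmetric", () =>
{
    return "Hello, Otel-Metric!";
});

app.Run();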

We are currently dealing with custom metrics. Of course, ASP.NET also provides out-of-the-box metrics that you can utilize. Just use the ASP.NET instrumentation feature by adding AddAspNetCoreInstrumentation() when configuring the metrics provider – more on that here.

Demo

Time to connect the dots. First, let’s create a Kubernetes cluster using kind where we can publish the demo service, spin up the OTEL collector instance and run a Prometheus/Grafana environment. If you want to follow along with the tutorial, clone the repo from https://github.com/cdennig/otel-demo and switch to the otel-demo directory.

Create a local Kubernetes Cluster

To create a kind cluster that is able to host a Prometheus environment, execute:

$ kind create cluster --name demo-cluster \
    --config ./kind/kind-cluster.yaml

The YAML configuration file (./kind/kind-cluster.yaml) adjusts some settings of the Kubernetes control plane so that Prometheus is able to scrape the endpoints of the controller services. Next, create the OpenTelemetry Collector instance.
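For orientation, a kind configuration that opens up the control plane metrics endpoints could look roughly like the sketch below. This is an assumption about what such a file typically contains, not a copy of ./kind/kind-cluster.yaml from the repository: the node layout is made up, and the kubeadm patch format can differ depending on your kind and Kubernetes versions.

```yaml
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
kubeadmConfigPatches:
  - |-
    kind: ClusterConfiguration
    # Let the control plane components listen on all interfaces so that
    # Prometheus can reach their metrics endpoints from inside the cluster
    controllerManager:
      extraArgs:
        bind-address: 0.0.0.0
    scheduler:
      extraArgs:
        bind-address: 0.0.0.0
    etcd:
      local:
        extraArgs:
          # etcd metrics over plain HTTP on port 2381
          listen-metrics-urls: http://0.0.0.0:2381
nodes:
  # hypothetical node layout
  - role: control-plane
  - role: worker
```

The etcd metrics port 2381 in this sketch corresponds to the kubeEtcd target port that is set in the Helm values further down.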

OTEL Collector

In the manifests directory, you’ll find two Kubernetes manifests. One of them (otel-collector.yaml) contains everything needed for the collector: the ConfigMap with the collector configuration (which will be mounted as a volume into the collector container), the Deployment of the collector itself and a Service exposing the ports for data ingestion (4318 for HTTP and 4317 for gRPC) and the metrics endpoint (8889) that will be scraped later on by Prometheus. It looks as follows:

apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-config
data:
  otel-collector-config: |-
    receivers:
      otlp:
        protocols:
          http:
          grpc:
    exporters:
      logging:
        loglevel: debug
      prometheus:
        endpoint: "0.0.0.0:8889"
    processors:
      batch:
    service:
      pipelines:
        metrics:
          receivers: [otlp]
          processors: [batch]
          exporters: [logging, prometheus]
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: otel-collector
spec:
  replicas: 1
  selector:
    matchLabels:
      app: otel-collector
  template:
    metadata:
      labels:
        app: otel-collector
    spec:
      containers:
        - name: collector
          image: otel/opentelemetry-collector:latest
          args:
            - --config=/etc/otelconf/otel-collector-config.yaml
          ports:
            - name: otel-http
              containerPort: 4318
            - name: otel-grpc
              containerPort: 4317
            - name: prom-metrics
              containerPort: 8889
          volumeMounts:
            - name: otel-config
              mountPath: /etc/otelconf
      volumes:
        - name: otel-config
          configMap:
            name: otel-collector-config
            items:
              - key: otel-collector-config
                path: otel-collector-config.yaml
---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: otel-collector
spec:
  type: ClusterIP
  ports:
    - name: otel-http
      port: 4318
      protocol: TCP
      targetPort: 4318
    - name: otel-grpc
      port: 4317
      protocol: TCP
      targetPort: 4317
    - name: prom-metrics
      port: 8889
      protocol: TCP
      targetPort: prom-metrics
  selector:
    app: otel-collector

Let’s apply the manifest.

$ kubectl apply -f ./manifests/otel-collector.yaml

configmap/otel-collector-config created

deployment.apps/otel-collector created

service/otel-collector created

Check that everything runs as expected:

$ kubectl get pods,deployments,services,endpoints
NAME                                  READY   STATUS    RESTARTS   AGE
pod/otel-collector-5cd54c49b4-gdk9f   1/1     Running   0          5m13s

NAME                             READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/otel-collector   1/1     1            1           5m13s

NAME                     TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                      AGE
service/kubernetes       ClusterIP   10.96.0.1      <none>        443/TCP                      22m
service/otel-collector   ClusterIP   10.96.194.28   <none>        4318/TCP,4317/TCP,8889/TCP   5m13s

NAME                       ENDPOINTS                                         AGE
endpoints/kubernetes       172.19.0.9:6443                                   22m
endpoints/otel-collector   10.244.1.2:8889,10.244.1.2:4318,10.244.1.2:4317   5m13s

Now that the OpenTelemetry infrastructure is in place, let’s add the workload exposing the custom metrics.

ASP.NET Workload

The demo application has been containerized and published to the GitHub container registry for your convenience. So to add the workload to your cluster, simply apply the ./manifests/otel-demo-workload.yaml that contains the Deployment manifest and adds two environment variables to configure the OTEL collector endpoint and the OTLP protocol to use – in this case gRPC.

Here’s the relevant part:

spec:
  containers:
    - image: ghcr.io/cdennig/otel-demo:1.0
      name: otel-demo
      env:
        - name: OTEL_EXPORTER_OTLP_ENDPOINT
          value: "http://otel-collector.default.svc.cluster.local:4317"
        - name: OTEL_EXPORTER_OTLP_PROTOCOL
          value: "grpc"

Apply the manifest now.

$ kubectl apply -f ./manifests/otel-demo-workload.yaml

Remember that the application also logs to the console. Let’s query the logs of the ASP.NET service (note that the pod name will differ in your environment).

$ kubectl logs po/otel-workload-69cc89d456-9zfs7

info: Microsoft.Hosting.Lifetime[14]

Now listening on: http://[::]:80

info: Microsoft.Hosting.Lifetime[0]

Application started. Press Ctrl+C to shut down.

info: Microsoft.Hosting.Lifetime[0]

Hosting environment: Production

info: Microsoft.Hosting.Lifetime[0]

Content root path: /app/

Resource associated with Metric:

service.name: otel.demo

service.version: 0.0.1

service.instance.id: b84c78be-49df-42fa-bd09-0ad13481d826

Export otel.demo.metric.gauge1, Meter: otel.demo.metric/0.0.1

(2022-08-20T11:40:41.4260064Z, 2022-08-20T11:40:51.3451557Z] LongGauge

Value: 10

Export otel.demo.metric.gauge2, Meter: otel.demo.metric/0.0.1

(2022-08-20T11:40:41.4274763Z, 2022-08-20T11:40:51.3451863Z] DoubleGauge

Value: 0.8778815716262417

Export otel.demo.metric.gauge1, Meter: otel.demo.metric/0.0.1

(2022-08-20T11:40:41.4260064Z, 2022-08-20T11:41:01.3387999Z] LongGauge

Value: 19

Export otel.demo.metric.gauge2, Meter: otel.demo.metric/0.0.1

(2022-08-20T11:40:41.4274763Z, 2022-08-20T11:41:01.3388003Z] DoubleGauge

Value: 0.35409627617124295

Also, let’s check whether the data arrives at the collector. Remember that it exposes the Prometheus endpoint at 0.0.0.0:8889/metrics. Let’s query it by port-forwarding the service to our local machine.

$ kubectl port-forward svc/otel-collector 8889:8889

Forwarding from 127.0.0.1:8889 -> 8889

Forwarding from [::1]:8889 -> 8889

# in a different session, curl the endpoint

$ curl http://localhost:8889/metrics

# HELP otel_demo_metric_gauge1

# TYPE otel_demo_metric_gauge1 gauge

otel_demo_metric_gauge1{instance="b84c78be-49df-42fa-bd09-0ad13481d826",job="otel.demo"} 37

# HELP otel_demo_metric_gauge2

# TYPE otel_demo_metric_gauge2 gauge

otel_demo_metric_gauge2{instance="b84c78be-49df-42fa-bd09-0ad13481d826",job="otel.demo"} 0.45433988869946285

Great, both components – the metric producer and the collector – are working as expected. Now, let’s spin up the Prometheus/Grafana environment, add a scrape target for the collector’s /metrics endpoint and create the Grafana dashboard for it.

Add Kube-Prometheus-Stack

The easiest way to add the Prometheus/Grafana stack to your Kubernetes cluster is to use the kube-prometheus-stack Helm chart. We will use a custom values.yaml file to automatically add a static Prometheus target for the OTEL collector called demo/otel-collector (the kubeEtcd setting is only needed in the kind environment):

kubeEtcd:
  service:
    targetPort: 2381
prometheus:
  prometheusSpec:
    additionalScrapeConfigs:
      - job_name: "demo/otel-collector"
        static_configs:
          - targets: ["otel-collector.default.svc.cluster.local:8889"]
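Because the kube-prometheus-stack ships the Prometheus Operator, an alternative to the static target is a ServiceMonitor that selects the collector service by its label and named port. The following is a sketch under assumptions, not something the demo repository contains: the resource name is made up, and the release label assumes the chart’s default behavior of only picking up ServiceMonitors labeled with the Helm release name.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: otel-collector              # hypothetical name
  namespace: monitoring
  labels:
    release: kube-prometheus-stack  # assumed to match the Helm release name
spec:
  # The collector service lives in the default namespace
  namespaceSelector:
    matchNames:
      - default
  selector:
    matchLabels:
      app: otel-collector           # label of the collector Service
  endpoints:
    - port: prom-metrics            # named Service port (8889)
      path: /metrics
      interval: 15s
```

With this approach, the target follows the service automatically and no scrape configuration has to be maintained in the Helm values. The ServiceMonitor can only be applied once the chart (and with it the CRD) is installed.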

Now, install the Helm chart in your cluster by executing:

$ helm upgrade --install --wait --timeout 15m \
    --namespace monitoring --create-namespace \
    --repo https://prometheus-community.github.io/helm-charts \
    kube-prometheus-stack kube-prometheus-stack --values ./prom-grafana/values.yaml

Release "kube-prometheus-stack" does not exist. Installing it now.

NAME: kube-prometheus-stack

LAST DEPLOYED: Mon Aug 22 13:53:58 2022

NAMESPACE: monitoring

STATUS: deployed

REVISION: 1

NOTES:

kube-prometheus-stack has been installed. Check its status by running:

kubectl --namespace monitoring get pods -l "release=kube-prometheus-stack"

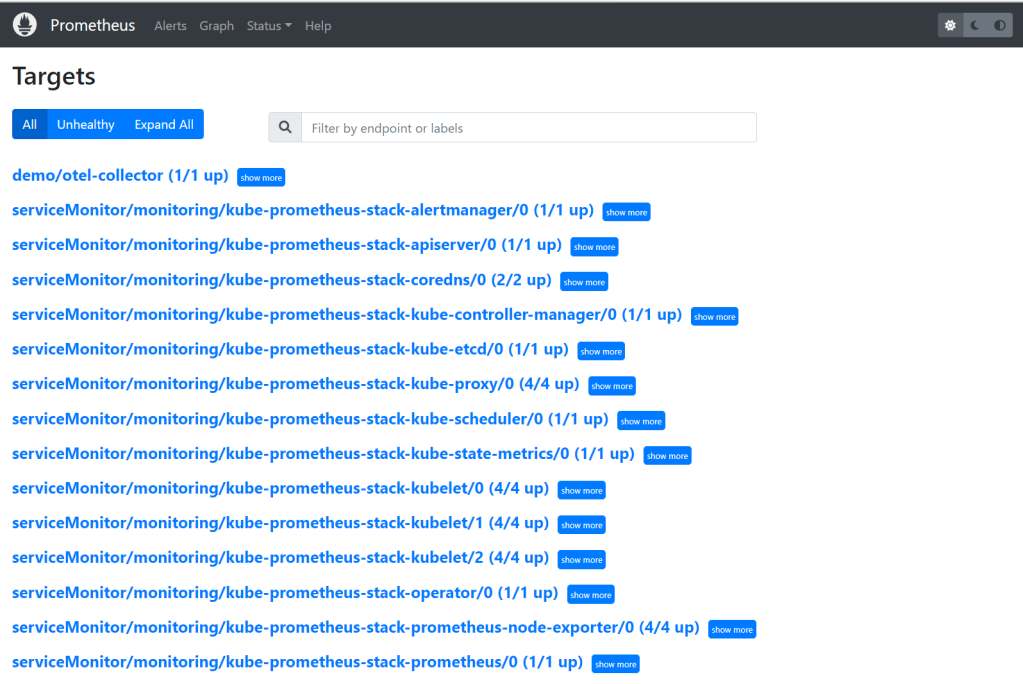

Let’s have a look at the Prometheus targets to check whether Prometheus can scrape the OTEL collector endpoint. Again, port-forward the service to your local machine and open a browser at http://localhost:9090/targets.

$ kubectl port-forward -n monitoring svc/kube-prometheus-stack-prometheus 9090:9090

That looks as expected. Now over to Grafana to create a dashboard that displays the custom metrics. As before, port-forward the Grafana service to your local machine and open a browser at http://localhost:3000. Because you need a username/password combination to log in to Grafana, we first need to grab that information from a Kubernetes secret:

# Grafana admin username

$ kubectl get secret -n monitoring kube-prometheus-stack-grafana -o jsonpath='{.data.admin-user}' | base64 --decode

# Grafana password

$ kubectl get secret -n monitoring kube-prometheus-stack-grafana -o jsonpath='{.data.admin-password}' | base64 --decode

# port-forward Grafana service

$ kubectl port-forward -n monitoring svc/kube-prometheus-stack-grafana 3000:80

Forwarding from 127.0.0.1:3000 -> 3000

Forwarding from [::1]:3000 -> 3000

After opening a browser at http://localhost:3000 and a successful login, you should be greeted by the Grafana welcome page.

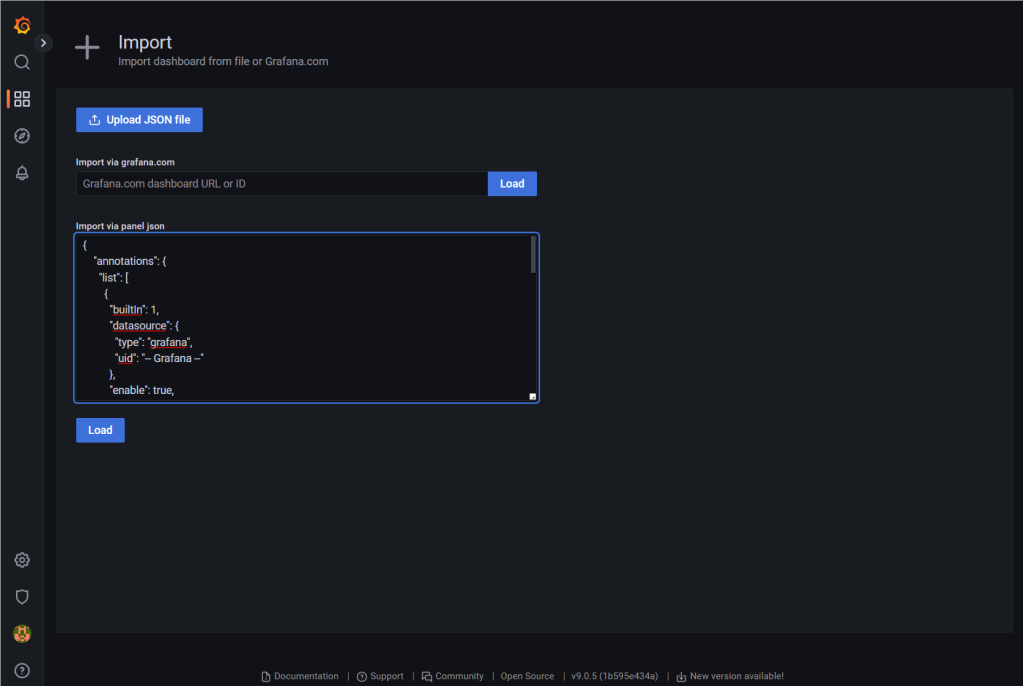

Add a Dashboard for the Custom Metrics

Head to http://localhost:3000/dashboard/import and upload the pre-created dashboard from ./prom-grafana/dashboard.json (or simply paste its content into the text box). After importing the definition, you should be redirected to the dashboard and see our custom metrics being displayed.
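If you prefer not to import the dashboard by hand, the Grafana instance deployed by the kube-prometheus-stack chart can usually pick up dashboards from labeled ConfigMaps. The sketch below is an assumption-laden illustration: the ConfigMap name and the minimal dashboard JSON are made up (it is not the dashboard.json from the repository), and it relies on the chart’s default dashboard sidecar settings. Only the two metric names are taken from the collector output shown earlier.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-demo-dashboard        # hypothetical name
  namespace: monitoring            # same namespace as the Grafana deployment
  labels:
    grafana_dashboard: "1"         # label the dashboard sidecar is assumed to watch
data:
  otel-demo.json: |-
    {
      "title": "OTEL Demo (sketch)",
      "panels": [
        {
          "type": "timeseries",
          "title": "gauge1",
          "gridPos": { "h": 8, "w": 12, "x": 0, "y": 0 },
          "targets": [ { "expr": "otel_demo_metric_gauge1" } ]
        },
        {
          "type": "timeseries",
          "title": "gauge2",
          "gridPos": { "h": 8, "w": 12, "x": 12, "y": 0 },
          "targets": [ { "expr": "otel_demo_metric_gauge2" } ]
        }
      ]
    }
```

The panels do not name a data source, so they fall back to Grafana’s default one, which in this stack is expected to be the bundled Prometheus.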

Wrap-Up

This demo showed how to use OpenTelemetry custom metrics in an ASP.NET service, sending telemetry data to an OTEL collector instance that is being scraped by a Prometheus instance. To close the loop, those custom metrics are eventually displayed in a Grafana dashboard. The advantage of this setup is that you use an open standard like OpenTelemetry to generate and collect metrics. The service the data is finally sent to, and the solution used to analyze it, can easily be exchanged via the OTEL exporter configuration: if you don’t want to use Prometheus, you simply adapt the OTEL pipeline and export the telemetry data to e.g. Azure Monitor, DataDog or Splunk.

I hope the demo has given you a good introduction to the world of OpenTelemetry. Happy coding! 🙂

I can’t tell you how happy I am to find this post. I have spent way too long trying to get all of the puzzle pieces to fit together and it looks like you’ve done almost exactly what I need! I don’t have time to implement it tonight, but I’m super excited. I might even pull your repo just to watch it all finally work together before I do the work in my environment.

Getting this to work is super rad, don’t you think?

Glad to hear the article is useful to you. I was also looking for a long time how everything fits together and found nothing. That’s why – after my “journey” – I wrote the blog post.

Hey Christian, awesome detailed post! Just wondering, since I’m not yet so versed in Kubernetes, how would one change this setup to one where you would only have the ASP.NET service together with a sidecar OpenTelemetry Collector? So the OTEL collector would handle the telemetry of the ASP.NET service but would not need to be front-facing, and would use its exporters to push data out to other environments.

Thank you very much for the feedback. Basically, it would come down to adjusting the OTEL_EXPORTER_OTLP_ENDPOINT to point to the “localhost” endpoint of the sidecar – sidecars can be called via “localhost” from the “application” container within a pod, because they share the network/pod IP. I have not tried it myself so far, but that’s how I would start the journey 🙂
